What AI-Ready Actually Means for a 20-Person Company

AI is useless on top of disconnected tools and dirty data. What 'AI-ready' actually means for small businesses, and the foundation you need first.

Key Takeaways

AI does not generate intelligence from thin air. It needs clean, connected data to produce anything useful

95% of generative AI pilots deliver no measurable P&L impact,1 and MIT NANDA’s 2025 review of 300 enterprise deployments traces the failures to flawed integration with the systems where business data lives, not the models themselves

For a 20-person company, “AI-ready” does not mean hiring a data science team. It means connecting the tools you already use so your data is accessible and consistent

The unsexy prerequisite to every successful AI project is workflow integration: the plumbing that lets data flow between systems without a human in the middle

You do not have to integrate everything before touching AI. Go workflow by workflow: integrate one revenue-critical path properly and layer AI on it, then expand to the next

Everyone is talking about AI. Your competitors mention it in every meeting. Your software vendors are bolting it onto every product page. If you run a small business, the pressure to “do something with AI” is real and growing.

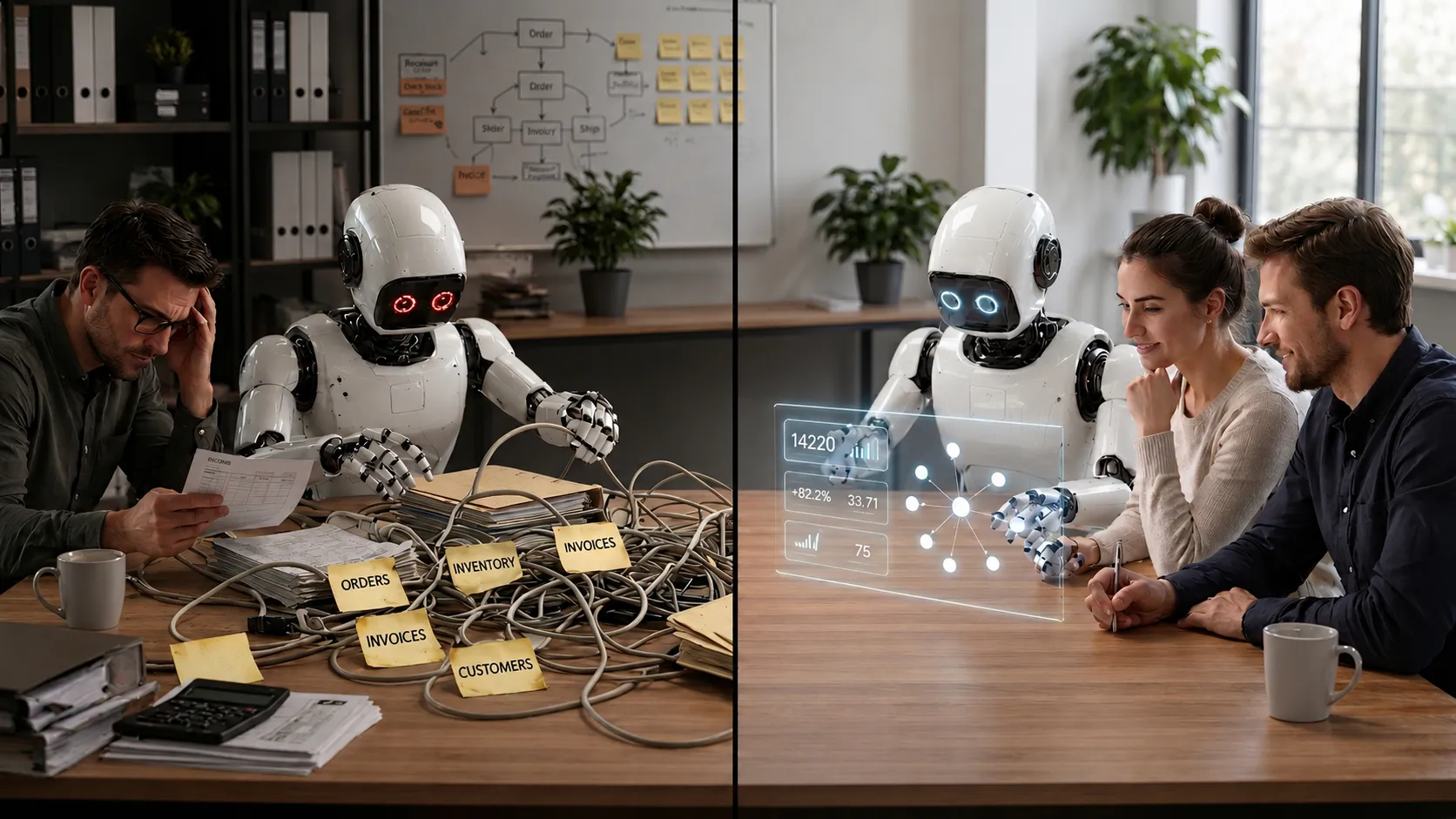

Here is the uncomfortable truth that nobody selling AI tools wants to lead with: for most small businesses, AI will not deliver meaningful results yet. Not because the technology is overhyped. AI is a genuine breakthrough. But it needs something most SMBs do not have. It needs your data to be clean and connected. If your business runs on a patchwork of disconnected tools with data trapped in silos and spreadsheets, bolting an AI layer on top of that mess will just produce confident-sounding nonsense at machine speed.

Sequencing matters here. AI is the destination. Integration is the road.

What AI actually needs to work

Strip away the marketing and AI is a pattern-matching engine. Whether it is a large language model summarising a customer thread or a forecasting tool predicting next month’s stock, both share the same dependency. They need data, and that data needs to be good. “Good” means three specific things.

Structured and consistent. AI cannot reason about your business if your customer records live in four different formats across four different tools. When your CRM calls someone “John Smith,” your invoicing system knows them as “J. Smith Ltd,” and your support desk has them as ticket #4471 with no name attached, no AI model can reconcile that into a useful customer profile. A recent survey found that only 4% of organisations consider their data AI-ready, with poor data quality being the single most cited barrier.2

Accessible from a single layer. AI tools need to query your data, not have a human export a CSV and upload it somewhere. If your order data lives in Shopify, your inventory in a warehouse management system, and your financials in Xero, an AI assistant that can only see one of those systems will give you answers that are technically correct but practically useless. It can tell you what sold last month but not whether you can actually fulfil what is selling this month.

Complete and current. AI trained on stale or partial data produces stale or partial conclusions. If your stock levels only update when someone remembers to run a manual sync, any AI prediction built on that data will be wrong in proportion to how out-of-date the numbers are. Bad data in, bad answers out: that is the fundamental constraint, however polished the model on top.
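To make the "structured and consistent" requirement concrete, here is a minimal Python sketch of linking records from several tools under one shared key. It assumes each system can export an email address for a customer; the field names, sample records, and the `link_records` helper are hypothetical and chosen only for illustration. Records with no usable key, like the nameless support ticket, are reported rather than silently dropped.

```python
from collections import defaultdict

# Hypothetical exports from three tools; field names are illustrative only.
crm = [{"name": "John Smith", "email": "John.Smith@example.com"}]
invoicing = [{"name": "J. Smith Ltd", "email": "john.smith@example.com "}]
support = [{"ticket": 4471, "email": None}]


def normalise_email(value):
    """Trim and lower-case an email so the same address compares equal."""
    if not value:
        return None
    return value.strip().lower()


def link_records(**systems):
    """Group records from each system under a shared, normalised email key.

    Returns (linked, unlinked). In this sketch, a later record with the
    same email in the same system overwrites the earlier one.
    """
    linked = defaultdict(dict)
    unlinked = []
    for system_name, records in systems.items():
        for record in records:
            key = normalise_email(record.get("email"))
            if key is None:
                # No shared key: this record cannot join a customer profile.
                unlinked.append((system_name, record))
            else:
                linked[key][system_name] = record
    return dict(linked), unlinked


linked, unlinked = link_records(crm=crm, invoicing=invoicing, support=support)
```

Here the CRM and invoicing records end up under one key, while the support ticket lands in `unlinked`. That second list is the useful output: it is the set of records no AI tool could attach to a customer either.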

Why disconnected tools kill AI projects

The average small business uses between 25 and 50 SaaS applications.3 Most of these tools are excellent at what they do in isolation. The problem is that “in isolation” is exactly how they operate. Native integrations between platforms tend to cover the basics (push a new contact here, trigger a notification there) but they rarely move the rich, structured data that AI needs.

Take a concrete failure mode. An ecommerce founder buys an AI demand-forecasting tool that hooks into Shopify. It can read sales history but not the warehouse system, where slow movers and discontinued SKUs are tagged. The tool recommends reordering a top seller that the warehouse already retired three months ago. The mistake is caught the first time and missed the second, and the founder spends three weeks of margin on returns and apologies. The model read demand correctly, but half the world was hidden from it.

We have seen the difference when the data foundation is solid versus when it is not. For one client, we built a system that uses AI to parse purchase order invoices, including blurry scans and photocopied documents, to automatically match products and file Royal Mail compensation claims. The AI component works well, but only because the entire data pipeline underneath it is clean. Every purchase order from Xero is indexed, every product is cross-matched against the Linnworks catalogue, and the whole thing runs on structured, connected data that flows without human intervention. Take away that pipeline and the AI has nothing to work with.

We also built a field service mobile app with on-device AI that checks photos for blur and luminosity problems in real time. The AI validation is the visible feature, but it only delivers value because the underlying system enforces structured data collection: GPS-locked locations and standardised forms that can only be filled inside the app at the verified site. The intelligence layer sits on top of an integration layer. Without that structure, the AI would just be guessing at photo quality with no way to enforce corrections or tie results back to the right job.

In 2024, Air Canada was ordered to pay damages after its customer service chatbot invented a bereavement-fare refund policy that contradicted the airline’s actual rules, published on the same website.5 The chatbot told a grieving customer he could claim retroactively; the real policy said the opposite. The tribunal found the airline liable, and the bot was retired. The model was doing its job, but the data underneath did not match the policy it was supposed to represent. At any scale, the failure mode is identical. Only the size of the consequences differs.

AI exposes how your business actually works

There is a sharper way to read everything we have just covered. AI does not only consume your data; it stress-tests the structure of your business. The moment you point it at a workflow, you find out whether that workflow really exists as a process or whether it has been quietly held together by people remembering things and making judgement calls that nobody wrote down.

For a 20-person company, this is the most useful thing AI does long before it starts producing value. A failed pilot is a free audit. The Shopify-only forecaster that recommended a discontinued SKU did not break the business; it revealed that the business was running on a connection that did not exist. A more positive frame: where you put AI first is the part of your operations you are choosing to audit.

What AI-ready looks like at 20 people

Enterprise AI maturity guides talk about data lakes, governance frameworks, and ML operations teams. That is not your world. A 20-person company does not need a chief data officer. But it does need the same underlying principles, scaled to reality.

A practical AI-readiness checklist

Your core systems talk to each other. Your ecommerce platform, accounting software, inventory management, and CRM should be exchanging data automatically, in real time or near-real time. CSV exports and someone copying numbers between tabs do not count. A Zapier workflow can be a real first win for a small business. The limits show up at three predictable points: volume that exceeds the task quotas, errors with no retry path, and any flow critical enough that a silent failure breaks something downstream. AI sitting on top of any of those turns a quiet failure into a fast, confident error.

You have a single source of truth. When someone asks “how many units of this product do we have?” there should be one answer, not three different numbers in three different systems. This does not require one monolithic database. It means your integration layer keeps the systems synchronised so discrepancies do not accumulate.

Your data is clean enough to trust. Duplicate customer records and inconsistent product names are the exact problems that make AI outputs unreliable, and they have a measurable cost long before AI enters the picture. If five people on your team each spend 30 minutes a day chasing data discrepancies (looking up which system has the right stock figure, or reconciling customer details before sending an invoice), at $35 per loaded hour that is roughly $22,000 a year of lost time (assuming about 250 working days), before you count the errors that still slip through. Feeding AI on top of that mess scales the errors, not the answers.

Your workflows are documented. AI can automate and optimise a workflow that exists as a clear, repeatable process. It cannot automate a process that lives in someone’s head or changes every time depending on who handles it. If your fulfilment process has undocumented exceptions that only Sarah knows about, AI will not discover those rules. It will ignore them and break things.
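The cost estimate in the checklist above is simple enough to rerun with your own numbers. This short Python sketch reproduces it; the figure of 250 working days a year is an assumption the calculation needs, and the function name is illustrative.

```python
def annual_discrepancy_cost(people, minutes_per_day, hourly_rate, working_days=250):
    """Yearly cost of time spent chasing data discrepancies.

    `working_days` defaults to 250, an assumed working year.
    """
    hours_per_day = people * minutes_per_day / 60
    return hours_per_day * hourly_rate * working_days


# Five people, 30 minutes a day each, at $35 per loaded hour.
cost = annual_discrepancy_cost(people=5, minutes_per_day=30, hourly_rate=35)
print(f"${cost:,.0f} per year")  # prints "$21,875 per year"
```

Swapping in your own headcount and loaded rate gives a quick baseline for what the integration work has to beat.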

The integration layer as AI foundation

Think of integration as the nervous system of your business software. AI is the brain, but a brain without nerves cannot act on what is happening. The integration layer is what makes data flow between your tools in a reliable, monitored way.

Picture two 20-person companies on the same stack: WooCommerce, a 3PL, QuickBooks, and a helpdesk. Company A wires them together with a low-code tool and hopes for the best. Company B treats integration as a structural requirement: proper APIs and monitoring on the path that touches revenue. Six months in, Company A has bought and quietly retired two AI tools because nobody trusts the answers. Company B has one AI assistant in production that the team actually uses, because the data underneath it is reliable enough to act on.

For a small business, the integration layer does not need to be complicated. It needs to handle errors gracefully instead of failing silently, and it needs to be monitored so that when something breaks (an API changes, a rate limit tightens, a data format shifts) someone knows about it before your team or your customers do.
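As a rough illustration of "handle errors gracefully instead of failing silently", the Python sketch below retries a failing sync step with exponential backoff and then raises an alert rather than giving up quietly. The names `run_with_retry` and `SyncError` are hypothetical, and `alert` defaults to `print` only as a stand-in for whatever notification channel you actually use.

```python
import time


class SyncError(Exception):
    """Raised when a sync step still fails after all retries."""


def run_with_retry(step, *, attempts=3, base_delay=1.0, alert=print, sleep=time.sleep):
    """Call `step()`, retrying with exponential backoff.

    If every attempt fails, send an alert and raise SyncError so the
    failure is visible instead of silent.
    """
    last_error = None
    for attempt in range(1, attempts + 1):
        try:
            return step()
        except Exception as error:  # real code should catch narrower types
            last_error = error
            if attempt < attempts:
                # Wait 1x, 2x, 4x ... the base delay between attempts.
                sleep(base_delay * 2 ** (attempt - 1))
    alert(f"Sync failed after {attempts} attempts: {last_error!r}")
    raise SyncError(str(last_error)) from last_error
```

The important design choice is the final two lines: once the retries run out, somebody is told and the caller gets an exception, so a failed sync cannot pass for a successful one.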

The businesses getting real value from AI right now are the ones that spent the previous months or years getting their operational data in order. McKinsey’s research keeps landing on the same finding: workflow redesign and data integration drive more EBIT impact from generative AI than the choice of model itself.4 The pattern is consistent: integration first, AI second.

Start here, not with AI

If you are a 20-person company wondering where to start with AI, the honest answer is probably “not with AI.” Start with the AI prerequisites: the foundation that makes everything else work.

Audit your data flows. Map out where your critical business data lives and how it moves between systems. Be specific: does your order data flow automatically from your ecommerce platform to your warehouse system, or does someone export it? Where does it break? Where does a human have to intervene? The gaps are often invisible until you try to automate them.

Fix the silent failures. Most small businesses have integrations that “work” in the sense that they mostly move data, most of the time. The problem is the exceptions: orders that do not sync and stock levels that drift between systems. These failures are quiet, which makes them dangerous. They are also the exact problems that will poison any AI system you build on top of them.

Connect the critical path first. You do not need to integrate everything at once. Start with the systems that touch your revenue: orders, inventory, fulfilment, and finance. Get those flowing reliably and you have built the data backbone that any AI integration can actually use. We wrote about this decision in more depth in our piece on why small businesses need an integration partner rather than trying to wire it all together themselves.

Standardise your data. Consistent naming conventions and clear field mappings between systems. This is the least exciting work on the list and the most important. AI is only as precise as the data it reads. If your product catalogue uses inconsistent naming across systems, your AI sales-trend analysis splits the same product into separate lines, each selling at a fraction of its real volume. The alert that should fire on a real winner never does, because the data does not look like a real winner anywhere.

Then think about AI. Once your data is clean and connected, you are in a position to get real value from AI tools: predictive inventory ordering, automated customer service triage, anomaly detection on your transactions. These capabilities become powerful when the underlying data is trustworthy. Before that, they are expensive guesswork.
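The "standardise your data" step above is where the split-product problem gets fixed. The Python sketch below shows one way to do it: normalise each product name, map it to a canonical SKU, and total the units per SKU. The alias table, SKU code, and sample rows are all hypothetical; in practice the mapping would come from your own catalogue.

```python
import re
from collections import Counter

# Hypothetical alias table: normalised product name -> canonical SKU.
CANONICAL_SKUS = {
    "blue widget large": "WIDGET-BLU-L",
    "widget blue l": "WIDGET-BLU-L",
}


def normalise_name(name):
    """Lower-case, replace punctuation with spaces, and trim the result."""
    return re.sub(r"[^a-z0-9]+", " ", name.lower()).strip()


def units_by_sku(sales_rows):
    """Total units per canonical SKU; collect names with no known mapping."""
    totals = Counter()
    unmapped = []
    for name, units in sales_rows:
        sku = CANONICAL_SKUS.get(normalise_name(name))
        if sku is None:
            unmapped.append(name)
        else:
            totals[sku] += units
    return totals, unmapped


# The same product under two names, plus one name nobody has mapped yet.
rows = [("Blue Widget (Large)", 40), ("widget - blue - L", 35), ("Mystery item", 2)]
totals, unmapped = units_by_sku(rows)
```

Without the mapping, the two widget rows look like separate products selling 40 and 35 units. With it, they count as one product selling 75, and the unmapped name is surfaced for someone to resolve.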

Integrate gradually, not all at once

The risk in “integration first, AI second” is reading it as a six-month gate before anything can move. The pragmatic version is workflow by workflow.

Pick one revenue-critical path that hurts today, like order to fulfilment or quote to invoice. Integrate that path properly: real APIs between the systems involved, with proper error handling and monitoring. Then layer AI on top of that one path: predictive reorder points on the products you fulfil, or automated triage on the support tickets that come through it. Prove the value on that workflow. Take on the next.

This keeps the discipline. The integration on the chosen path is real APIs with proper error handling, not a low-code patch over a brittle process. Zapier and Make earned their place doing the easier V1 wins, but the path you put AI on needs V2 plumbing underneath. Even so, the scope is small enough that a 20-person company can ship the first version in weeks rather than quarters. Trying to fix the whole stack before anything moves is one failure mode. Calling a fragile low-code flow “good enough” for the AI path because moving fast feels productive is the other.

The AI tools will keep getting better and cheaper. AI does not fix a messy business; it exposes it and scales the consequences. We built SaaS Glue around the belief that your team should not be the middleware between your software, and that real operational readiness for AI is structural before it is technical. If you are thinking about AI but suspect your systems need connecting first, we are happy to talk honestly about where you actually stand.

Frequently Asked Questions: AI Readiness for Small Businesses

- Do I need to hire a data scientist to become AI-ready?

- No. For a 20-person company, AI-readiness is about getting your existing tools connected and your data clean, not about hiring specialists. The integration work comes first. Once your data flows reliably between systems, off-the-shelf AI tools can do a surprising amount without any custom model building.

- Is AI just hype, or should I actually be paying attention?

- AI is a genuine technology shift worth paying attention to. The value it delivers, though, depends entirely on the quality of data you feed it. If your data is fragmented and inconsistent, AI will produce outputs that sound confident but miss the mark. For most small businesses, the right question is whether their systems are ready to use it well.

- What is the biggest mistake SMBs make with AI?

- Buying an AI tool before fixing the data it needs to work with. It is like buying a high-performance engine and dropping it into a car with no fuel lines. The tool might be excellent, but it cannot perform without clean, connected data flowing into it.

- How long does it take to become AI-ready?

- It depends on how many systems you have and how disconnected they are. For a typical small business, connecting the core systems (ecommerce, inventory, accounting, CRM) and cleaning up the critical data flows can take a few weeks to a few months. That is not wasted time. Those integrations deliver immediate operational value even before you add AI on top.

- Can I use Zapier or Make to get my systems AI-ready?

- For simple data flows, low-code tools can help. But they hit limits around error handling and monitoring, especially as data volumes grow. If an integration touches money, inventory, or customer-facing operations (the same data AI will rely on), you need something more reliable. A silent failure in a Zapier workflow becomes a silent error in your AI outputs.

- What should I integrate first if I want to prepare for AI?

- Start with the systems on your critical revenue path: orders, inventory, fulfilment, and finance. These are the data sources that AI will need to make useful predictions and recommendations. Get those flowing reliably and you have built the foundation for almost any AI use case relevant to a small business.

- How do I know if my data quality is good enough for AI?

- Ask yourself a few questions. Do you have one definitive answer for how many units of a product you have in stock, or does it depend on which system you check? Do customer records match across your CRM, invoicing, and support tools? Can you pull a report on last month's orders without manually combining data from multiple sources? If the answer to any of these is no, your data quality needs work before AI will be reliable.
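The first of those questions can be checked mechanically. As a minimal sketch, assuming you can export stock counts per SKU from each system, the Python function below lists every SKU where the systems disagree or where one system has no record at all. The system names and figures are invented for illustration.

```python
def stock_discrepancies(stock_by_system, tolerance=0):
    """Return SKUs whose counts differ across systems by more than `tolerance`.

    `stock_by_system` maps a system name to a {sku: units} dict. A SKU
    missing from any system is always reported, with None for that system.
    """
    skus = set()
    for counts in stock_by_system.values():
        skus.update(counts)

    report = {}
    for sku in sorted(skus):
        seen = {system: counts.get(sku) for system, counts in stock_by_system.items()}
        known = [units for units in seen.values() if units is not None]
        missing_somewhere = len(known) < len(seen)
        if missing_somewhere or max(known) - min(known) > tolerance:
            report[sku] = seen
    return report


# Hypothetical exports from three systems.
stock = {
    "shop": {"SKU-A": 10, "SKU-B": 5},
    "warehouse": {"SKU-A": 10, "SKU-B": 3},
    "accounting": {"SKU-A": 10},
}
report = stock_discrepancies(stock)
```

An empty report means there is one answer to "how many units do we have?". Anything else is a list of the places where an AI tool would be reading conflicting numbers.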

References

1 MIT NANDA — “The GenAI Divide: State of AI in Business 2025”. The report defines successful implementation as systems delivering sustained productivity gains and documented P&L impact, verified by both end users and executives. 95% of the 300 publicly disclosed enterprise initiatives reviewed between January and June 2025 did not meet that bar. Methodology: 52 executive interviews, 153 leader surveys, and 300 public AI deployments. The study is enterprise-focused; we apply its conclusions to smaller businesses on the basis that the integration dynamic it identifies is structural rather than scale-dependent. Report deck: https://mlq.ai/media/quarterly_decks/v0.1_State_of_AI_in_Business_2025_Report.pdf. News coverage: https://fortune.com/2025/08/18/mit-report-95-percent-generative-ai-pilots-at-companies-failing-cfo/

2 Harvard Business Review — Is Your Data Ready for AI?, 2024. Available at: https://hbr.org/2024/10/is-your-data-ready-for-ai

3 MarketingLTB — SaaS Statistics 2026, citing BetterCloud’s State of SaaSOps research on average SaaS app counts at small and mid-market firms. Available at: https://marketingltb.com/blog/statistics/saas-statistics/

4 McKinsey & Company — The State of AI: How Organizations Are Rewiring to Capture Value, March 2025. The survey-based research finds that workflow redesign has the largest reported effect on EBIT impact from generative AI, alongside investment in data foundations. Available at: https://www.mckinsey.com/capabilities/quantumblack/our-insights/the-state-of-ai-how-organizations-are-rewiring-to-capture-value

5 Moffatt v. Air Canada, 2024 BCCRT 149 — British Columbia Civil Resolution Tribunal, February 2024. The tribunal found Air Canada liable for misinformation provided by its customer service chatbot, which invented a bereavement-fare refund policy contradicting the airline’s published rules; CAD $812.02 in damages awarded. Legal analysis: https://commons.allard.ubc.ca/cgi/viewcontent.cgi?article=1376&context=ubclawreview. News coverage: https://aibusiness.com/nlp/air-canada-held-responsible-for-chatbot-s-hallucinations-